SELU

-

class

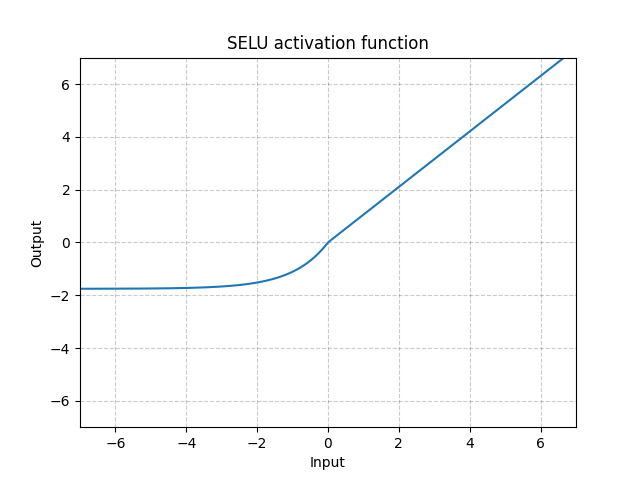

torch.nn.SELU(inplace=False)[source]¶ Applied element-wise, as:

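$$\text{SELU}(x) = \text{scale} \cdot \left(\max(0, x) + \min\left(0, \alpha \cdot (\exp(x) - 1)\right)\right)$$

with $\alpha = 1.6732632423543772848170429916717$ and $\text{scale} = 1.0507009873554804934193349852946$.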
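The element-wise definition can be illustrated with a minimal pure-Python sketch. The helper name `selu_scalar` is hypothetical and not part of `torch`. It evaluates the two branches separately (positive inputs are only scaled, non-positive inputs go through the exponential term). This is equivalent to the max/min form and avoids overflow in the exponential for large positive inputs.

```python
import math

# Constants of the SELU definition.
ALPHA = 1.6732632423543772848170429916717
SCALE = 1.0507009873554804934193349852946


def selu_scalar(x: float) -> float:
    """SELU for a single float (hypothetical helper, not a torch function)."""
    if x > 0:
        # max(0, x) = x and min(0, alpha * (exp(x) - 1)) = 0
        return SCALE * x
    # max(0, x) = 0 and min(0, alpha * (exp(x) - 1)) = alpha * (exp(x) - 1)
    return SCALE * ALPHA * math.expm1(x)


for value in (-2.0, 0.0, 1.5):
    print(value, selu_scalar(value))
```

For very negative inputs the output approaches `-SCALE * ALPHA`, which is the lower bound of the function.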

Warning

When using kaiming_normal or kaiming_normal_ for initialisation, nonlinearity='linear' should be used instead of nonlinearity='selu' in order to get Self-Normalizing Neural Networks. See torch.nn.init.calculate_gain() for more information.

More details can be found in the paper Self-Normalizing Neural Networks.
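The initialisation advice in the warning might look like the following sketch. The layer sizes, the seed, and the zeroed bias are assumptions made for illustration only. With `nonlinearity='linear'` the gain is 1, so with the default `mode='fan_in'` the weights are drawn with standard deviation `1 / sqrt(fan_in)`.

```python
import math

import torch
import torch.nn as nn

torch.manual_seed(0)  # only to make this sketch reproducible

fan_in, fan_out = 512, 256  # arbitrary illustrative sizes
layer = nn.Linear(fan_in, fan_out)

# nonlinearity='linear' instead of 'selu', as the warning recommends.
nn.init.kaiming_normal_(layer.weight, nonlinearity='linear')
nn.init.zeros_(layer.bias)  # assumption of this sketch, not stated in the docs

empirical_std = layer.weight.std().item()
expected_std = 1.0 / math.sqrt(fan_in)
print(empirical_std, expected_std)

# Gains reported by calculate_gain for the two settings, for comparison.
gain_linear = nn.init.calculate_gain('linear')
gain_selu = nn.init.calculate_gain('selu')
print(gain_linear, gain_selu)
```

The two printed standard deviations should agree closely. The second line shows how the gain would differ if `nonlinearity='selu'` were passed instead.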

- Parameters

inplace (bool, optional) – can optionally do the operation in-place. Default: False

- Shape:

Input: $(*)$, where $*$ means any number of dimensions.

Output: $(*)$, same shape as the input.
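As a rough illustration of the shape rule and of the inplace parameter, the sketch below applies the module to a 3-dimensional tensor (the sizes are arbitrary). It checks that the output shape matches the input, and that `inplace=True` writes the result into the input tensor's own storage.

```python
import torch
import torch.nn as nn

x = torch.randn(2, 3, 4)  # any number of dimensions is accepted

# Default inplace=False: a new tensor is returned and x is left untouched.
out = nn.SELU()(x)
same_shape = out.shape == x.shape

# inplace=True: the result overwrites the input tensor's storage.
y = x.clone()
out_inplace = nn.SELU(inplace=True)(y)
shares_storage = out_inplace.data_ptr() == y.data_ptr()
matches = torch.allclose(out_inplace, out)

print(same_shape, shares_storage, matches)
```

In-place use saves an allocation but destroys the original values, so it only suits cases where the input is not needed afterwards.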

Examples:

>>> import torch
>>> import torch.nn as nn
>>> m = nn.SELU()
>>> input = torch.randn(2)
>>> output = m(input)